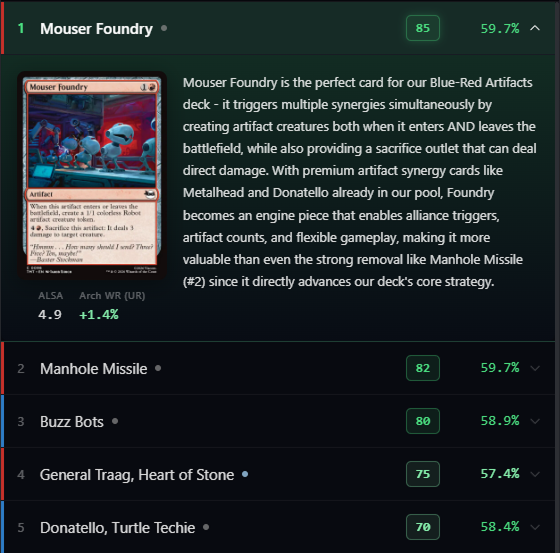

Before LLMs became as capable as they are today, I worked on several AI products, including Google Lens, and one of the key things I always tried to target was use cases with no real failure state. For example, if you show shopping results that look similar to the outfit in a user's photo, rather than trying to find the exact item of clothing, then you haven't failed as long as you are directionally correct. My thinking on that has changed with current AI products and capabilities.

You can now be more ambitious in delivering specifics to your end users, but your product WILL fail sometimes. The LLM will hallucinate, it will go off the rails, it will say 2+2=Apple. Your goal is obviously to guide it toward states and circumstances where it fails less, but there's no way to avoid failure entirely. You need to ensure that there are escape hatches for users, resets, and, importantly, ways to get your AI product back on track. Failure cases are not edge cases; they are a primary use case you have to design for given the current state of the tech.
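
To make the idea of escape hatches and resets a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: `Session`, `answer_with_recovery`, the `generate` and `is_valid` callables, and the fallback wording are assumptions, not part of any real product or library. It assumes you have some way to check a reply before showing it, which in practice is the hard part.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Session:
    """Hypothetical conversation state that the user can always reset."""
    history: List[str] = field(default_factory=list)

    def reset(self) -> None:
        # Escape hatch: drop accumulated context so a derailed session starts clean.
        self.history.clear()


def answer_with_recovery(
    session: Session,
    prompt: str,
    generate: Callable[[List[str], str], str],  # assumed wrapper around your model call
    is_valid: Callable[[str], bool],            # assumed product-specific sanity check
    max_attempts: int = 2,
    fallback: str = "Sorry, I couldn't get that right. Try rephrasing or start over.",
) -> str:
    """Return the first reply that passes validation, else a fallback message."""
    for _ in range(max_attempts):
        reply = generate(session.history, prompt)
        if is_valid(reply):
            # Only validated replies are stored, so a bad answer
            # does not contaminate later turns.
            session.history.extend([prompt, reply])
            return reply
    # Every attempt failed: be honest about it and point the user at a way out.
    return fallback
```

The design choice worth noting is that the failure path is a first-class return value rather than an exception, and that rejected replies never enter the history. A caller would pair the fallback message with a visible "start over" control wired to `session.reset()`.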